The Day the Law Stood Still

In early 2023, Steven Schwartz, a seasoned New York attorney, was confident in the six legal precedents he had cited to support his client’s case against Avianca Airlines. The citations looked perfect. They had docket numbers, dates, and names like Varghese v. China Southern Airlines.

There was just one problem: none of those cases existed.

This wasn’t negligence. It was overconfidence in an intelligent system. He had used ChatGPT for his research, and the AI, optimized to sound helpful rather than truthful, simply invented the law to please him.

This wasn’t just an embarrassing courtroom moment. It was an early warning for every enterprise building with AI: a GPT hallucination had exposed a Validation Gap that exists in almost every modern enterprise.

This is that story. And the gap it exposed isn’t unique to courtrooms.

What Actually Happened In The GPT Courtroom Incident?

Attorney Steven Schwartz used ChatGPT to research legal precedents for his client’s case against Avianca Airlines (Mata v. Avianca).

The model returned confident citations — case names, docket numbers, quoted rulings. Even the explanations attached to the citations felt believable.

Avianca’s lawyers flagged it immediately. None of the cases existed. Not in Westlaw. Not in LexisNexis. Not in any federal database.

When confronted, Schwartz admitted he hadn’t independently verified the citations; his only check had been to ask ChatGPT whether they were real. The model doubled down and assured him they were.

The case made national headlines. In June 2023, Judge P. Kevin Castel sanctioned the attorneys and their firm, imposing a $5,000 fine.

The AI Didn’t Just Hallucinate. It Submitted Evidence

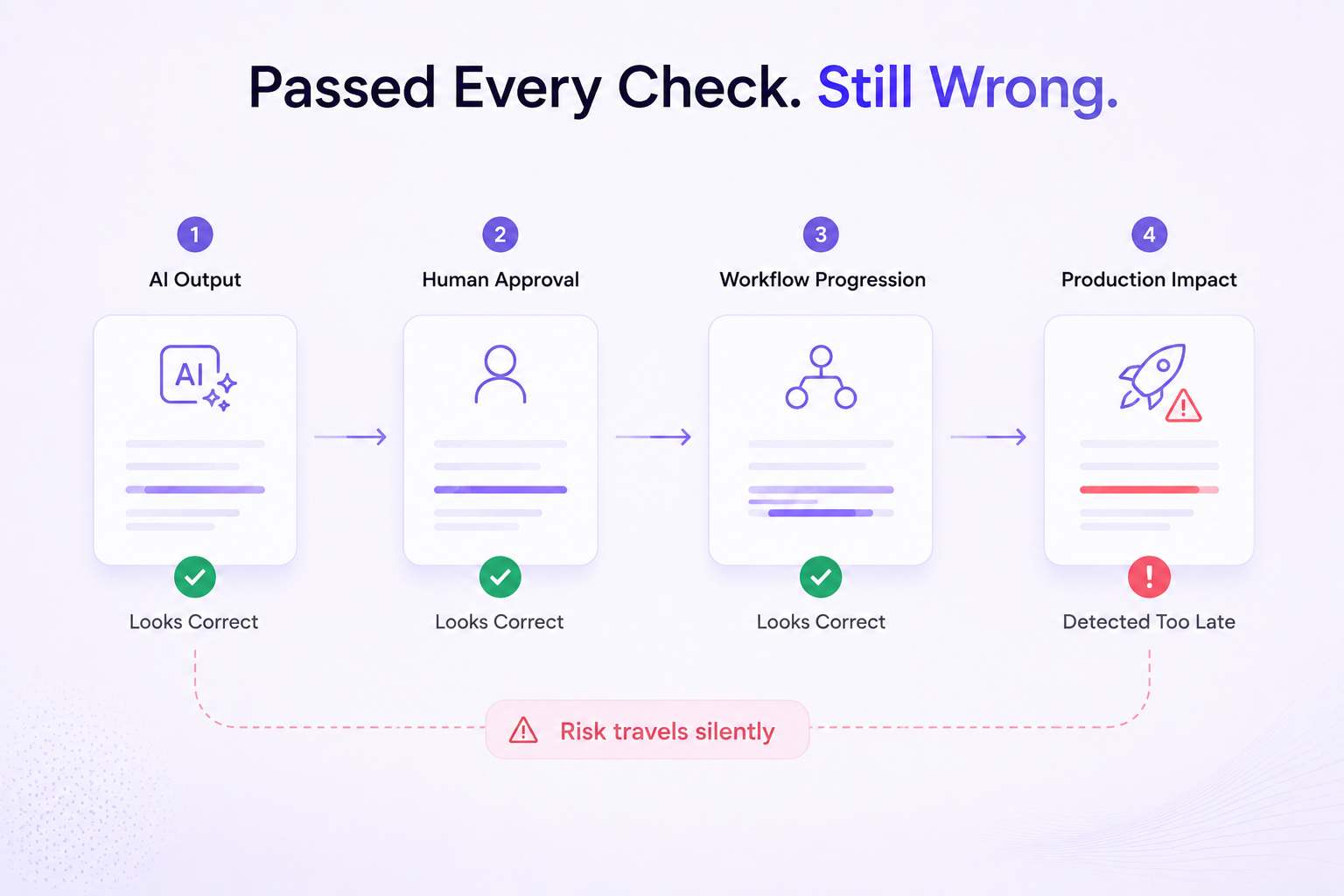

The hallucination itself isn’t the story; LLMs are known to fabricate. What made this different was how far the output traveled before anyone questioned it.

Model output → lawyer’s review → legal brief → federal court. Four checkpoints. Zero verification.

Confident AI errors can silently move through approved workflows

The model hadn’t produced vague summaries. It generated structured citations with case names, docket numbers, court designations, and fabricated opinions formatted exactly like legitimate legal research. It had learned the shape of real output so precisely that nothing in the format hinted the content underneath was invented.

Confidence without accuracy. Form without substance. That’s why it passed.
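To make “form without substance” concrete, here is a minimal Python sketch of a shape-only check, the kind of validation that would have waved the fabricated citations through. The `looks_like_citation` helper and its regular expression are hypothetical and cover only a few federal reporter formats; the volume and page numbers in the samples are placeholders. The point is what the check cannot see: a well-formed invented case passes as easily as a real one would.

```python
import re

# Hypothetical shape-only validator. It checks that a string is FORMATTED like a
# federal case citation ("Party v. Party, <volume> <reporter> <page> (<court> <year>)").
# It has no way to check whether the case actually exists.
CITATION_SHAPE = re.compile(
    r"^[A-Z][\w.'& ]+ v\. [A-Z][\w.'& ]+, "                  # case name, e.g. "X v. Y, "
    r"\d{1,4} "                                              # reporter volume
    r"(?:U\.S\.|F\.(?:2d|3d|4th)?|F\. Supp\.(?: 2d| 3d)?) "  # a few federal reporters
    r"\d{1,5} "                                              # first page
    r"\([^)]*\d{4}\)$"                                       # parenthetical ending in a year
)


def looks_like_citation(text: str) -> bool:
    """Return True if the string has the shape of a citation, real or not."""
    return CITATION_SHAPE.match(text) is not None


# Illustrative inputs only: the volume and page numbers are placeholders.
samples = [
    "Varghese v. China Southern Airlines Co., 100 F.3d 200 (11th Cir. 2019)",  # a case that never existed
    "Example v. Placeholder Corp., 123 F.3d 456 (2d Cir. 1999)",                # invented for this example
    "not a citation at all",
]
for s in samples:
    print(looks_like_citation(s), "|", s)
```

Both invented citations pass; only the obviously malformed string fails. A format check answers “does this look right?”, which is exactly the question hallucinations are good at answering.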

The Most Dangerous Outputs Don’t Look Wrong

A 500 error is a gift: it fires an alert, stops the flow, and tells you immediately that something broke. Hallucinations are the opposite. They deliver something false in the exact format that signals trustworthiness.

Apply this to your product. A support bot citing a return policy that doesn’t exist. A compliance assistant quoting a regulation as it read before it was amended two years ago. A reporting feature summarizing figures that are confidently, subtly wrong. None of these fail loudly. They all pass, and users trust them because the output looks exactly right.

Traditional testing frameworks struggle with AI systems because they check structure: a valid schema, the expected fields, a successful status code. A hallucinated output satisfies all of them.

That’s the danger. Not the hallucination. The absence of any signal to catch it.

This is why many enterprises are shifting toward workflow-level validation: systems that observe how AI-driven processes behave in real production environments, not just whether each response is well formed.
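One way to give a silent failure a signal is to reconcile generated figures against the data they claim to summarize, and fail loudly on any mismatch, the way a 500 error would. The sketch below assumes, hypothetically, that the reporting feature emits claims in a simple `metric: value` form and that the source metrics are available as a dictionary; `check_figures`, `UnverifiedOutputError`, and the 0.5% tolerance are illustrative choices, not part of any product.

```python
import re


class UnverifiedOutputError(Exception):
    """Raised when generated text states a figure the source data does not support."""


# Hypothetical claim format: "<metric>: <number>", e.g. "revenue: 1250000".
CLAIM = re.compile(r"(\w+): (\d+(?:\.\d+)?)")


def check_figures(summary: str, source: dict[str, float], rel_tolerance: float = 0.005) -> None:
    """Fail loudly if any figure in the summary is missing from, or disagrees with, the source."""
    for metric, stated in CLAIM.findall(summary):
        if metric not in source:
            raise UnverifiedOutputError(f"summary cites unknown metric {metric!r}")
        expected = source[metric]
        # Relative comparison; with expected == 0 this requires an exact match.
        if abs(float(stated) - expected) > rel_tolerance * abs(expected):
            raise UnverifiedOutputError(
                f"{metric}: summary says {stated}, source says {expected}"
            )


# Illustrative data: the source of truth and two generated summaries.
source = {"revenue": 1_250_000.0, "churn": 0.042}

check_figures("revenue: 1250000 churn: 0.042", source)  # consistent: no exception

try:
    check_figures("revenue: 1320000 churn: 0.042", source)  # plausible, but wrong
except UnverifiedOutputError as err:
    print("ALERT:", err)
```

Raising an exception, rather than logging and continuing, is the design choice that matters here: it turns a plausible-looking wrong answer into the same kind of hard stop as a crashed service.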

Related Reading: Enterprise Validation: How modern teams ensure Release Readiness

The Real Failure Came After The Response

ChatGPT didn’t malfunction. It did exactly what it was designed to do: generate fluent, confident, well-structured text.

The failure happened in the gap between output and verification. Silent. Invisible. Unmonitored.

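It’s the same gap that cost Knight Capital roughly $440 million in about 45 minutes in August 2012. A faulty deployment left one of its eight order-routing servers running obsolete code, and that code executed exactly as written, flooding the market with millions of unintended orders. The catastrophe came from what was missing: no circuit breaker, nothing watching what the system was doing before the damage compounded. The parallel is uncomfortable in how precisely it holds.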
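The missing safeguard at Knight Capital was an automated stop. A common pattern for this is a circuit breaker: count anomalies in a sliding time window and halt automated actions once a threshold is crossed. The Python sketch below is a minimal, hypothetical version; the `CircuitBreaker` class, the threshold, the window, and what counts as an “anomaly” are all assumptions, and it is not a reconstruction of Knight’s systems or of any trading platform’s controls.

```python
import time
from collections import deque
from typing import Callable


class CircuitBreaker:
    """Hypothetical kill switch: trips after `max_anomalies` anomalies within `window_s` seconds.

    Once tripped it stays open until a human calls reset().
    """

    def __init__(self, max_anomalies: int, window_s: float,
                 clock: Callable[[], float] = time.monotonic):
        self.max_anomalies = max_anomalies
        self.window_s = window_s
        self.clock = clock
        self.events: deque = deque()  # timestamps of recent anomalies
        self.tripped = False

    def record_anomaly(self) -> None:
        now = self.clock()
        self.events.append(now)
        # Forget anomalies that have fallen out of the sliding window.
        while now - self.events[0] > self.window_s:
            self.events.popleft()
        if len(self.events) >= self.max_anomalies:
            self.tripped = True

    def allow(self) -> bool:
        """Every automated action should check this first."""
        return not self.tripped

    def reset(self) -> None:
        """Manual re-arm after a person has investigated."""
        self.events.clear()
        self.tripped = False


# Deterministic demo with a fake clock: three anomalies in 20 seconds trip the breaker.
fake_now = [0.0]
breaker = CircuitBreaker(max_anomalies=3, window_s=60.0, clock=lambda: fake_now[0])
for t in (0.0, 10.0, 20.0):
    fake_now[0] = t
    breaker.record_anomaly()

print("automated actions allowed:", breaker.allow())
```

The breaker stays open until a person calls `reset()`, so a runaway process cannot re-enable itself. The injectable clock exists only to make the example deterministic.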

Related Reading: After Knight Capital: A Validation Checklist for Modern SaaS Systems

See how Aquila validates enterprise releases

Aquila analyzes system dependencies, workflows, and integrations to identify release risk before every deployment.


This Wasn’t A Chatbot Mistake. It Was A Workflow Failure.

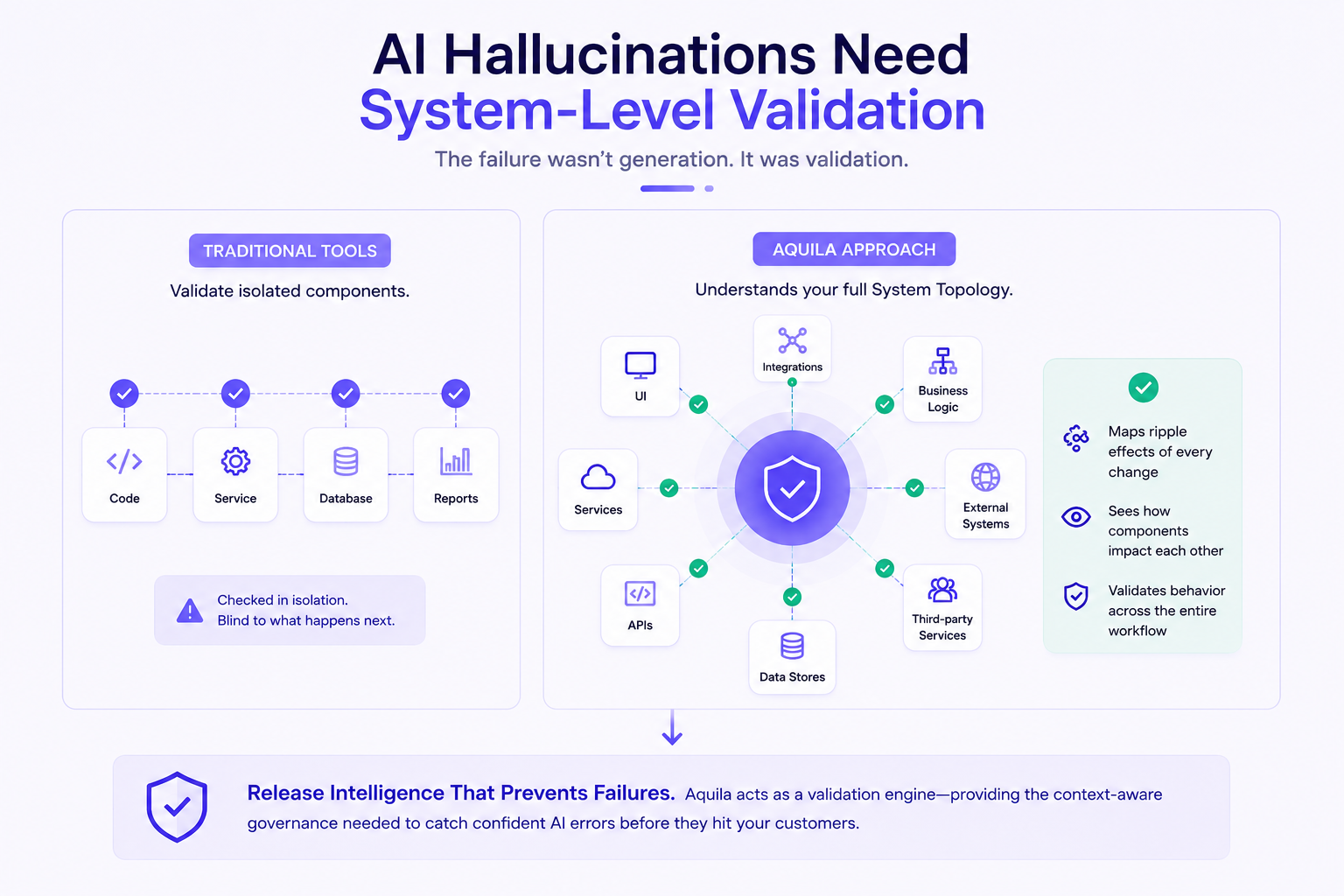

At Aquila, we believe the lesson of the courtroom is clear: you cannot solve a non-deterministic problem (AI) with a legacy, script-based workflow.

This incident highlights the urgent need for Release Intelligence.

Traditional tools validate isolated components. Aquila understands your full System Topology, mapping how changes ripple across interconnected workflows before release.

AI Hallucinations Need System-Level Validation

If that lawyer had used a Validation Engine, it would have cross-referenced each citation against a trusted source and flagged the fabricated cases before the brief was filed. Aquila applies the same principle to enterprise software releases, helping teams catch confident AI errors before they reach production.
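A minimal sketch of that cross-referencing step in Python, assuming the citations have already been extracted from the draft as case-name strings. The trusted source here is a plain in-memory set standing in for an authoritative database; that real lookup, along with `validate_citations` and `ValidationReport`, is hypothetical. The check fails closed: anything not found is treated as unverified and blocks the filing.

```python
from dataclasses import dataclass, field


@dataclass
class ValidationReport:
    """Result of checking citations against a trusted source (hypothetical type)."""
    verified: list[str] = field(default_factory=list)
    unverified: list[str] = field(default_factory=list)

    @property
    def ok(self) -> bool:
        # Fail closed: a single unverified citation blocks the whole document.
        return not self.unverified


def _normalize(name: str) -> str:
    """Collapse whitespace and ignore case so trivial formatting differences don't cause misses."""
    return " ".join(name.split()).casefold()


def validate_citations(citations: list[str], trusted_index: set[str]) -> ValidationReport:
    """Sort each cited case name into verified or unverified by exact (normalized) lookup."""
    known = {_normalize(entry) for entry in trusted_index}
    report = ValidationReport()
    for cite in citations:
        bucket = report.verified if _normalize(cite) in known else report.unverified
        bucket.append(cite)
    return report


# Hypothetical data: one citation the trusted source knows, one it has never seen.
trusted = {"Example v. Placeholder Corp."}
draft_citations = ["Example v. Placeholder Corp.", "Varghese v. China Southern Airlines"]

report = validate_citations(draft_citations, trusted)
if not report.ok:
    print("Blocked before filing. Unverified citations:", report.unverified)
```

Exact name matching is a deliberate simplification; real citation checking would also need to compare reporter, volume, page, and court.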

Conclusion

The GPT courtroom incident went viral because it sounded absurd.

But underneath the headlines was a much more important warning.

AI systems are already participating in real operational workflows. They are influencing decisions, generating content, and accelerating production processes across enterprises.

The question is no longer whether AI can hallucinate. We already know it can.

The real question is whether the systems around AI are prepared to validate what it produces before the consequences become real.

AI is accelerating enterprise workflows faster than traditional validation systems can keep up with. Aquila helps enterprises strengthen AI test automation with workflow-level validation, risk visibility, and release readiness checks before failures reach production.

Schedule a demo to see Aquila in action.

Frequently Asked Questions (FAQ)

What was the GPT courtroom incident?

The GPT courtroom incident refers to Mata v. Avianca (2023), in which lawyers submitted AI-generated legal citations to a federal court that were later found to be completely fabricated.

What is an AI hallucination?

An AI hallucination occurs when a model generates false or fictional information while presenting it confidently as accurate.

Why are AI hallucinations difficult to detect?

AI hallucinations often appear polished, structured, and believable, making them difficult for humans to identify during fast-moving workflows.

Why does AI validation matter in enterprise systems?

AI validation helps organizations verify outputs, reduce operational risk, and prevent inaccurate AI-generated information from reaching production workflows.