Your CI dashboard is a sea of green. 1,500 tests passed, containers are healthy, and you hit “Deploy.” Ten minutes later, production is on fire.

The uncomfortable part? Not a single test failed.

So what exactly did your testing validate?

The break didn’t happen in your codebase. It emerged in the interaction layer—a shifted dependency, a subtle API contract change, or a service-to-service assumption that was never explicitly validated.

Most enterprise teams have lived this. The outage hurts, but the realization that everything was technically “validated” stings more. This is the breaking point for traditional quality strategies. They were built for monoliths and predictable cycles, but modern software is distributed and far more complex.

The cost of this gap is staggering. Research from the Consortium for Information & Software Quality (CISQ) estimates that poor software quality costs the U.S. economy more than $2 trillion annually, driven largely by operational failures and system breakdowns.

In complex systems, passing tests doesn’t guarantee release readiness. The real question isn’t “Did the tests pass?” but “Is the system actually ready to ship?”

This is where many enterprises are beginning to rethink quality entirely — moving beyond test execution toward what some teams now call Enterprise Validation.

Here’s why traditional software quality strategies fail, and why enterprises are shifting toward Enterprise Validation, a model that enables true Release Intelligence.

Traditional quality strategies were built for a different era

Traditional strategies weren’t “bad” by design. They were actually quite brilliant for the world they were built in. Back when software was a single, predictable monolith, these methods were the gold standard. You had one codebase, one release train, and one team that owned the whole thing.

For a long time, this worked. If the individual parts passed, we assumed the system would hold together. Traditional strategies were designed for systems with stable boundaries. Modern enterprise systems no longer fit that model.

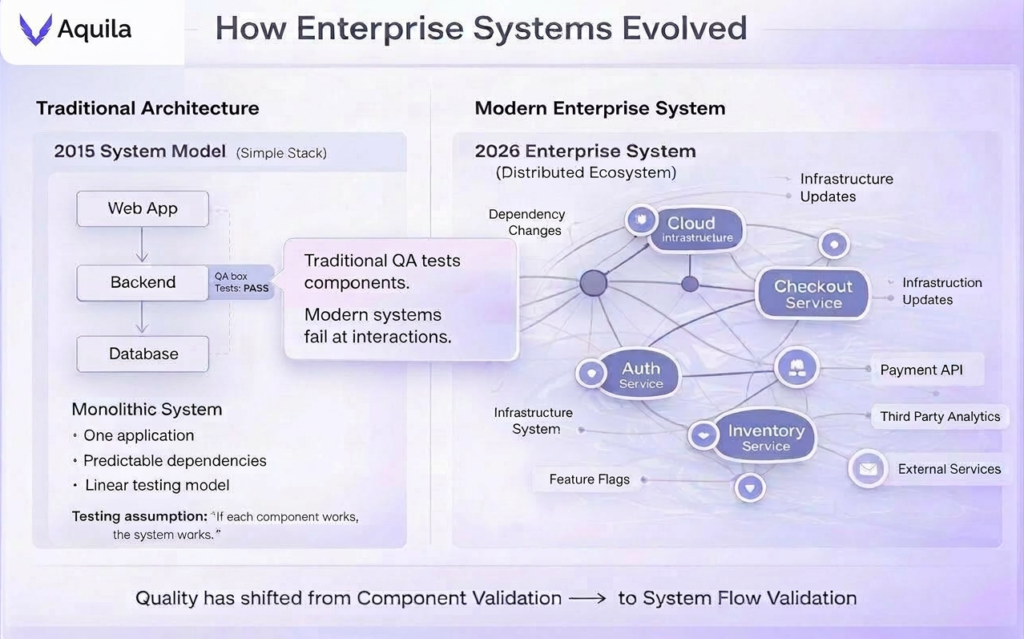

How Enterprise Systems Have Evolved

Modern systems are no longer contained. They are ecosystems of hundreds of interconnected services, APIs, and distributed layers. Here, a single change doesn’t just impact a feature—it ripples across the entire infrastructure, creating unpredictable side effects that traditional testing simply wasn’t built to catch.

Here is the reality of the 2026 enterprise landscape:

The shift from component-level QA to system-of-systems flow validation.

- From Monoliths to Distributed Mesh: Enterprise systems now run on hundreds of interconnected microservices, where a small change in one service can ripple across the entire system.

- The API Explosion: Modern applications depend on third-party APIs that evolve independently, meaning production behavior can change even when internal tests pass.

- The Velocity Gap: Continuous delivery and AI-assisted development push changes faster than traditional phase-based validation cycles can handle.

The result? Enterprise software has become a system-of-systems defined by workflow dependencies and service interactions. Here, failures rarely appear randomly. They cluster around critical workflows and dependency paths, where a small change can cascade across multiple services.
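To make the cascade concrete, here is a minimal sketch of how a team might estimate which services a change can reach. It assumes a hand-maintained dependency map; the service names and the `blast_radius` helper are hypothetical and exist only for illustration.

```python
from collections import deque

# Hypothetical dependency map: each service lists the services it calls.
DEPENDS_ON = {
    "checkout": ["payments", "inventory"],
    "payments": ["fraud-check"],
    "inventory": [],
    "fraud-check": [],
    "reporting": ["payments"],
}


def blast_radius(changed: str, depends_on: dict[str, list[str]]) -> set[str]:
    """Return every service that directly or transitively depends on `changed`."""
    # Invert the edges so we can walk from a service to its callers.
    callers: dict[str, set[str]] = {name: set() for name in depends_on}
    for service, deps in depends_on.items():
        for dep in deps:
            callers.setdefault(dep, set()).add(service)

    # Breadth-first walk over the inverted graph.
    affected: set[str] = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for caller in callers.get(current, ()):
            if caller not in affected:
                affected.add(caller)
                queue.append(caller)
    return affected


print(sorted(blast_radius("fraud-check", DEPENDS_ON)))
# ['checkout', 'payments', 'reporting']
```

In this toy map, a change to a leaf service that nobody thinks of as critical still reaches three of the other four services, which is the kind of path a component-level test suite never exercises.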

Here’s where the traditional QA model begins to break:

As complexity grows, teams add more tests, but more volume doesn’t mean more confidence. The traditional model breaks in three places.

- The Maintenance Tax: Fragile, locator-based automation turns into overhead, forcing teams to spend more energy fixing scripts than improving quality.

- The State Paradox: Traditional tests validate isolated actions, but distributed systems fail through inconsistent underlying states that surface-level assertions miss.

- The Semantic Gap: Tests measure implementation success while critical business workflows quietly degrade.

Test results tell you what executed successfully. They don’t tell you whether the system is truly ready for release.
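The State Paradox is easier to see in a toy example. The sketch below assumes a simplified in-memory `Shop` that stands in for an order service and an inventory service; every name in it is hypothetical. The action-level assertion passes while a cross-service invariant does not.

```python
from dataclasses import dataclass, field


@dataclass
class Shop:
    """Toy in-memory stand-in for two services: orders and inventory."""
    stock: dict[str, int] = field(default_factory=lambda: {"widget": 5})
    orders: list[tuple[str, int]] = field(default_factory=list)

    def place_order(self, item: str, qty: int) -> dict:
        self.orders.append((item, qty))
        # Deliberate bug: the inventory update is skipped, as if the
        # downstream call were dropped or the event never arrived.
        return {"status": 200, "item": item, "qty": qty}


def surface_check(response: dict) -> bool:
    """What an isolated, action-level test typically asserts."""
    return response["status"] == 200


def state_check(shop: Shop, initial_stock: dict[str, int]) -> bool:
    """Cross-service invariant: stock consumed must equal units ordered."""
    for item, start in initial_stock.items():
        ordered = sum(qty for name, qty in shop.orders if name == item)
        if shop.stock.get(item, 0) != start - ordered:
            return False
    return True


shop = Shop()
initial = dict(shop.stock)
response = shop.place_order("widget", 2)
print(surface_check(response))     # True
print(state_check(shop, initial))  # False
```

The first check is the green tick on the dashboard. The second is the question the dashboard never asked.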

Why this failure matters to the C-suite

When traditional quality strategies hit the wall, the fallout migrates from the engineering pipeline directly onto the balance sheet. For the C-suite, this creates a dangerous accountability gap: leadership becomes responsible for release decisions while remaining blind to the structural truth of the system.

Most organizations cannot answer the fundamental questions that define their stability:

- Where is our automation architecture fragile? (The Maintenance Liability)

- Which business workflows are most vulnerable to change? (The Revenue Risk)

- What will break if we modify a critical service or API? (The Dependency Ripple)

- Which flows must be prioritized before this specific release? (The Resource Blindspot)

- Where are our systems accidentally and dangerously coupled? (The Structural Flaw)

Instead of deterministic answers, leadership is often fed “Activity Signals”: test counts, coverage percentages, and green dashboards. These rarely explain where risk actually lives inside the system.

The Bottom Line: Real-world quality is measured by Structural Coverage. If your strategy doesn’t provide a single structural truth of your environment, it isn’t a validation model; it’s a simulation. Enterprise Validation replaces guesswork with measurable release readiness.

Beyond Automation: Introducing Enterprise Validation Strategy

The answer to failing QA isn’t “more automation”. Most enterprises are already drowning in tests that validate parts while ignoring the system. This is exactly why traditional software quality strategies fail in modern enterprises.

Enterprise Validation Strategy flips the script, moving the focus from code correctness to system-level confidence.

How Enterprise Validation Works in Practice

Enterprise validation focuses on three structural signals: critical workflows, risk concentration, and release readiness.

The strategy works by evaluating the systemic risk of a release through five non-negotiable capabilities:

- Risk-First Validation: Shifting focus from exhaustive “coverage” to high-impact areas where failure is most expensive.

- Flow-Aware Testing: Moving beyond isolated services to validate the “handshakes” and end-to-end journeys across APIs and third-party tools.

- Test Observability: Treating tests as sensors that provide high-resolution signals on system health rather than a simple Pass/Fail binary.

- Release Intelligence: Synthesizing test results with dependency health to provide a “weather report” of actual ecosystem stability.

- System-of-Systems Governance: Providing the bird’s-eye visibility needed to catch the “butterfly effect” before a single change disrupts the entire enterprise.
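As a rough illustration of the first and fourth capabilities, the sketch below ranks workflows by risk and then weights pass rates by that same risk. The scoring formula, the field names, and the sample numbers are assumptions made for this example; they are not a standard and not a description of any particular product.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    business_impact: float  # 0..1, hypothetical cost-of-failure weight
    change_exposure: float  # 0..1, share of its dependencies touched by the release
    pass_rate: float        # 0..1, from the most recent flow-level test runs


def risk(w: Workflow) -> float:
    """Hypothetical risk score: impact multiplied by exposure."""
    return w.business_impact * w.change_exposure


def prioritize(workflows: list[Workflow]) -> list[Workflow]:
    """Order workflows so the riskiest are validated first."""
    return sorted(workflows, key=risk, reverse=True)


def readiness(workflows: list[Workflow]) -> float:
    """Risk-weighted pass rate across all tracked workflows."""
    total = sum(risk(w) for w in workflows)
    if total == 0:
        return 1.0  # the release touches no tracked workflow
    return sum(risk(w) * w.pass_rate for w in workflows) / total


flows = [
    Workflow("profile-update", 0.2, 0.5, 1.0),
    Workflow("checkout", 1.0, 0.8, 0.5),
    Workflow("search", 0.5, 0.0, 1.0),
]
print([w.name for w in prioritize(flows)])
print(round(readiness(flows), 2))
```

With these sample numbers the unweighted average pass rate is about 0.83, while the risk-weighted figure is about 0.56, because the one flow that is both high-impact and heavily exposed is also the one that is failing.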

Traditional testing assumes that if every individual part works, the whole machine is fine. But in a mesh environment, that’s a dangerous bet. Failure almost always happens in the space between the components—the connections we didn’t map and the interactions we didn’t see coming.

We are no longer validating isolated code paths. We are validating connectivity and flow across the system. If your strategy doesn’t account for how risk travels across those invisible boundaries, you aren’t testing reality — you’re testing a fantasy. And this is the exact problem space Aquila was built for.

The Aquila difference: Engineering release confidence

For more than a decade, software quality has been optimized for one metric: execution speed.

In modern enterprise systems, speed is no longer the constraint. Visibility is. Faster execution without structural insight simply allows organizations to fail faster, and at scale.

Aquila was built to solve exactly this challenge.

Rather than treating tests as isolated checks, Aquila models how the system actually behaves. It maps the flow of real business workflows across services, APIs, and data layers, revealing the structural points where risk accumulates.

Testing activity becomes something far more valuable: release intelligence.

At the core of Aquila are three deterministic signals that transform fragmented testing data into a clear view of system stability:

- Execution — Capturing Workflow Reality: Aquila captures how revenue-critical workflows traverse UI, API, and database layers, mapping the handshakes that define your system-of-systems.

- Validation — Analyzing Structural Risk: Aquila identifies critical path nodes and surfaces risk concentration, revealing where dependencies cluster and where historical instability threatens production.

- Governance — Quantifying Release Readiness: Aquila synthesizes topology health and execution intelligence into a single deterministic signal, giving engineering leaders a measurable view of system stability before release.

Execution tells you what ran. Validation reveals what matters. Governance decides when it’s safe to ship. Aquila gives engineering leaders the visibility to make that decision with confidence.

Where Testing Ends and Release Governance Begins

In modern enterprise systems, testing is no longer the problem. Release decisions are.

Traditional “testing” has become a routine technical checkpoint. Validation, however, has become a leadership responsibility. The gap between a passing test suite and a safe production system is where many digital initiatives quietly fail.

The real challenge is no longer running more tests. It is understanding where risk actually lives inside the system.

This is why enterprises are shifting from test execution to release intelligence. This shift marks the transition from traditional QA to Enterprise Validation and release governance.

Organizations that master this shift will move faster, release with greater confidence, and avoid the hidden fragility that slows most modern software teams.

Ready to see how Aquila helps engineering leaders turn structural visibility into measurable release readiness? Schedule a demo to explore Enterprise Validation in action.

Frequently Asked Questions (FAQ)

Why do traditional quality strategies fail in modern enterprises?

Traditional QA was designed for monoliths with stable, predictable boundaries. Modern enterprises use distributed microservices and third-party APIs where failures occur at the interaction points. Testing components in isolation fails to capture the risks created by these complex service dependencies.

What is Enterprise Validation Strategy?

It is a framework that prioritizes system-level behavior over component correctness. It uses flow-level testing, dependency mapping, and integrated signals to verify that end-to-end business processes remain functional across a distributed architecture.

Why is test coverage insufficient for modern software quality?

Code coverage only measures which lines of code were executed. It does not account for runtime variables, environmental shifts, or API contract changes. High coverage can still result in production failure if the interactions between services are not validated under load or change.
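A contract change is a good example of something coverage cannot see. The sketch below assumes a consumer that records the response fields it relies on and checks a provider payload against them. The contract, the field names, and the `contract_violations` helper are hypothetical; real teams often use dedicated contract-testing tools for this.

```python
# Hypothetical contract: the fields a consumer relies on, with expected types.
CONSUMER_CONTRACT = {"order_id": str, "total_cents": int, "currency": str}


def contract_violations(payload: dict, contract: dict[str, type]) -> list[str]:
    """List every way `payload` breaks the consumer's expectations."""
    problems = []
    for field_name, expected in contract.items():
        if field_name not in payload:
            problems.append(f"missing field: {field_name}")
        elif not isinstance(payload[field_name], expected):
            actual = type(payload[field_name]).__name__
            problems.append(f"{field_name}: expected {expected.__name__}, got {actual}")
    return problems


# The provider renamed `total_cents` to `total` and switched to a decimal string.
new_response = {"order_id": "A-17", "total": "19.99", "currency": "USD"}
print(contract_violations(new_response, CONSUMER_CONTRACT))
# ['missing field: total_cents']
```

Every line of the consumer's code can be covered by tests that use the old payload shape, and none of them will notice the rename.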

What is Release Intelligence?

Release Intelligence is a decision-making layer that synthesizes system topology, change impact analysis, and historical instability data. It provides a deterministic signal of release readiness based on actual system risk rather than a simple percentage of passing tests.

How do distributed systems change software validation?

Distributed systems introduce non-linear failure modes. Because services deploy independently and rely on external APIs, validation must move from checking static code to monitoring dynamic connectivity and the “flow” of data across service boundaries.

How can engineering teams achieve real release confidence?

Confidence is achieved by shifting from execution-based testing to intelligence-based validation. This requires mapping service dependencies, identifying high-risk impact areas, and validating the stability of critical business flows before a deployment starts.

References

Consortium for Information & Software Quality (CISQ). The Cost of Poor Software Quality in the U.S.

https://www.it-cisq.org/the-cost-of-poor-software-quality-in-the-us/